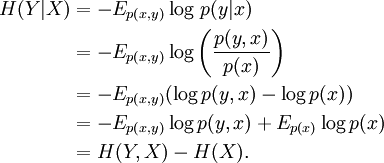

I have a question about applying Stan results to downstream information-theoretic metrics, including entropy, conditional entropy, and information gain (IG).

Here's the background: I have a feature vector of response variables y, say a measurement of an individual's stature, and my target (conditioning) variable x, say the country that individual comes from. My ultimate goal is to learn the information gain, i.e., how much more certain we are of an individual's country of origin given their stature.

To be precise, $IG = H(x) - H(x \mid y)$, the entropy of the target variable minus the conditional entropy of x given y. Given that stature is continuous (and country is discrete), the conditional entropy is $H(x \mid y) = -\sum_{x} \int f(x, y) \log f(x \mid y) \, dy$.

Math aside, does anyone have suggestions on how the Stan log probability density can be used here? Should this be done by extracting the results after fitting, or could it be embedded in the generated quantities block?

I'm working with a VERY general model that treats stature as normally distributed, with a mean function and an sd function. Note that X in that model is not the target variable from above; it is a covariate, age. I assume I'd have to include the target somewhere. Eventually, I would also like to get IG from a multivariate normal (MVN) model of more than one trait (i.e., stature and weight).

Related:

- V-Measure: A Conditional Entropy-Based External Cluster Evaluation Measure (Andrew Rosenberg and Julia Hirschberg).
- "By using the new conditional entropy, we propose a measure for attribute importance. Based on the measure, a heuristic attribute reduction algorithm is …"
- "Example 9: Hierarchical Multiscale corrected Cross-Conditional Entropy. Import the x and y components of the Henon system of equations and create a …"
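One way the log densities could feed into IG is shown below as a minimal sketch, not a definitive method. It makes three assumptions. First, at a fixed age, stature for country c is Normal(μ_c, σ_c), for instance using one posterior draw of the per-country mean and sd. Second, the country proportions π_c are known and positive. Third, the posterior class probability p(c | y) = π_c f_c(y) / Σ_k π_k f_k(y) is computed in log space, and H(x | y) = -Σ_c ∫ π_c f_c(y) log p(c | y) dy is integrated numerically over a grid of statures.

All function names and grid settings are hypothetical. `normal_lpdf` is a plain-Python stand-in that returns the same value as Stan's `normal_lpdf`, not a call into Stan. Results are in nats.

```python
import math


def normal_lpdf(y, mu, sigma):
    """Log density of Normal(mu, sigma) at y, constants included (mirrors Stan's normal_lpdf)."""
    z = (y - mu) / sigma
    return -0.5 * z * z - math.log(sigma) - 0.5 * math.log(2.0 * math.pi)


def log_sum_exp(values):
    """Numerically stable log(sum(exp(v) for v in values))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def entropy(probs):
    """Shannon entropy H(x) in nats of a discrete distribution (e.g. country proportions)."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def conditional_entropy(priors, mus, sigmas, n_grid=4000, width=10.0):
    """H(x | y) in nats for discrete x (country) and y | x=c ~ Normal(mus[c], sigmas[c]).

    Assumes every prior is > 0. Integrates -sum_c pi_c f_c(y) log p(c | y) over y
    with the midpoint rule on a grid spanning +/- `width` sds around all the means.
    """
    lo = min(m - width * s for m, s in zip(mus, sigmas))
    hi = max(m + width * s for m, s in zip(mus, sigmas))
    dy = (hi - lo) / n_grid
    log_priors = [math.log(p) for p in priors]
    total = 0.0
    for i in range(n_grid):
        y = lo + (i + 0.5) * dy  # midpoint of the i-th grid cell
        # log f(c, y) = log pi_c + log f_c(y) for each country c
        log_joint = [lp + normal_lpdf(y, m, s) for lp, m, s in zip(log_priors, mus, sigmas)]
        log_marginal = log_sum_exp(log_joint)  # log f(y), the mixture density
        for lj in log_joint:
            # accumulate -f(c, y) * log p(c | y) * dy, with log p(c | y) = log f(c, y) - log f(y)
            total -= math.exp(lj) * (lj - log_marginal) * dy
    return total


def information_gain(priors, mus, sigmas):
    """IG = H(x) - H(x | y), i.e. the mutual information between country and stature, in nats."""
    return entropy(priors) - conditional_entropy(priors, mus, sigmas)


def information_gain_per_draw(priors, draws):
    """One IG value per posterior draw; each draw is a (mus, sigmas) pair of per-country lists."""
    return [information_gain(priors, mus, sigmas) for mus, sigmas in draws]


if __name__ == "__main__":
    # Hypothetical numbers: two countries, equal proportions, overlapping statures (cm)
    ig = information_gain([0.5, 0.5], [160.0, 175.0], [7.0, 7.0])
    print(f"IG = {ig:.4f} nats ({ig / math.log(2):.4f} bits)")
```

The sketch handles extraction and embedding as follows:

- **After fitting.** Running `information_gain_per_draw` over every extracted posterior draw gives a posterior distribution for IG rather than a single number.
- **Inside `generated quantities`.** An alternative is the Monte Carlo form H(x | y) = -E[log p(x | y)]. Simulate a country with `categorical_rng` and a stature with `normal_rng`, then evaluate log p(x | y) using `normal_lpdf` and `log_sum_exp`.
- **Multivariate case (stature and weight).** The same recipe should carry over with a multivariate normal log density (e.g. `multi_normal_lpdf`). Monte Carlo is usually preferable there, because a grid grows exponentially with the number of traits.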
